How to Choose the Best Proxy for Web Scraping (2026 Guide)

Web scraping is the process of extracting publicly available data from websites using software or scripts. In the early days of the internet, this was simple: most sites delivered static HTML with little regard for who (or what) was making the request.

However, many companies now want to protect their public data, and modern websites often deploy multi-layered defences, including IP reputation analysis, request behaviour monitoring, and browser fingerprinting, to detect and restrict scraping.

Using reliable proxies in scraping workflows is one of the key strategies for reducing detection risk and bypassing anti-bot measures. In this guide, we help you find the right proxy provider and explain how proxies support web scraping at scale.

Best Proxies for Web Scraping

Choosing the best proxies for web scraping starts with selecting an appropriate provider and proxy type based on factors such as detectability, speed, and cost, while also keeping the security level of your scraping target in mind. You don’t want to overspend on an expensive proxy solution if you don’t have to.

Residential Proxies

Residential proxies suit most scraping work because they balance anonymity and performance. These IPs are issued by ISPs to real users, so anti-bot systems trust them more and block them less often.

These proxies are comparatively expensive due to the higher levels of trust and IP sourcing complexity. They can also be slightly slower than other types, especially datacenter IPs, because of real-world bottlenecks on consumer connections.

Developers often deploy residential proxies to scrape high-risk targets, such as e-commerce sites and social media platforms.

Datacenter Proxies

Datacenter proxies are the fastest and cheapest of the lot. Developers generally purchase multiple datacenter IPs at once for low-risk bulk work.

These IPs originate from cloud service providers and web hosting companies, which makes it easy for anti-bot systems to identify them as datacenter traffic and impose bans to limit abuse.

Because they are prone to bans, datacenter IPs are best used against targets with weak detection in place.

ISP Proxies

ISP (static residential) proxies often have higher trust than datacenter IPs and stability comparable to residential IPs. They are assigned by ISPs but hosted in datacenters, making them a perfect hybrid.

They work best when you need session continuity throughout a scraping workflow, as ISP proxies keep the IP addresses assigned at subscription. They are generally billed per IP, similar to datacenter IPs, and while providers advertise unlimited bandwidth, fair usage limits generally apply.

These proxies are a good fit for scraping high-security targets, such as major e-retailers and social media platforms. However, they can still be identifiable depending on the provider and IP history.

Mobile Proxies

Mobile proxies are the stealthiest of all the proxy types. This is because these IPs are allotted to real cellular devices and are often shared among multiple users, making detection extremely difficult.

On the downside, performance can be unreliable due to factors such as connection strength and the number of users in a given location.

Developers generally reserve mobile proxies for ultra-sensitive targets where session continuity isn’t an absolute requirement. Note that mobile proxies are also the most expensive of all proxy types.

Top Proxy Providers for Web Scraping

The following table compares the providers covered below by features, free trial, price, and unique selling point (USP).

| Proxy Provider | Features | Free Trial | Price | USP |
|---|---|---|---|---|
| Ping Proxies | 100+ Gbps capacity; 99.991% historical uptime; HTTP & SOCKS5; 40+ endpoints; 150+ ISP subnets; REST API; unlimited concurrent sessions; advanced geo-targeting | 1 GB free residential bandwidth | $1.75/GB (residential); $2.25/IP (ISP); $1.4/IP (datacenter) | Smartpath-backed bandwidth savings, ACL rules, and real-time network debugging |
| Webshare | 99.97% uptime; HTTPS & SOCKS5; REST API; Chrome extension; multiple proxy types including static residential and dedicated proxies | 10 proxies free (no card required) | $3.5/GB (residential); $6/month (static residential); 10 DC proxies free | Free entry plan with no credit card |
| Bright Data | 99.99% uptime; scraper APIs; browser API with anti-captcha; dataset marketplace; AI & automation integrations; GDPR/CCPA compliant; ISO 27001 certified | Free proxies (no card required) | $4/GB (residential); $18/month (ISP); $14/month (datacenter); $8/GB (mobile) | Enterprise-grade ecosystem combining proxies, APIs, and compliance |
| Oxylabs | 99%+ success rate; sub-second response time; web scraper API; anti-bot headless browser; AI Studio; 30+ integrations; HTTPS & SOCKS5 | 7-day trial (companies) | $4/GB (residential); 5 IPs free (datacenter); $16/month (ISP); $5.4/GB (mobile) | Enterprise-focused scraping infrastructure with AI-assisted automation |
| SOAX | 99.95% success rate; sub-second response; HTTPS, SOCKS5, UDP, QUIC; Web Data API with anti-WAF; sticky sessions; browser extension | 400 MB for 3 days at $1.99 | $90/month starter (25 GB residential/mobile; 145 GB datacenter) | Strong residential/mobile focus with advanced protocol support |

Want to learn about each proxy provider in detail? Here's a quick breakdown.

Ping Proxies

Ping Proxies offers exciting features for web scraping, including Smartpath (automatic bandwidth optimization), live network activity (real-time request debugging), Proxy User ACL rules (control proxy access), and live pool indicators (real-time IP availability).

It offers a high-quality IP network with a capacity exceeding 100 Gbps and an excellent 99.991% historical uptime. Customers show their trust with a 4.7/5 Trustpilot score and 150+ five-star reviews.

Its 35 million IPs across 195+ countries give it a broad geographical spread for worldwide scraping targets. Moreover, the dashboard is straightforward to use and allows targeting specific countries, cities, ZIP codes, and ASNs with ease.

The network supports HTTP, HTTPS, and SOCKS5 protocols. You also get access to 40+ REST API endpoints and 250+ ISP subnets. It offers residential, datacenter (shared/dedicated), and ISP proxy networks. All subscriptions include unlimited concurrent sessions, geo-targeting, 99.9% uptime guarantee, and API access.

Pricing: Plans start at $1.75/GB for residential proxies. You can also claim 1 GB of free residential bandwidth to get started.

Webshare

Webshare has 500K+ datacenter/ISP IPs and a residential network of over 80 million IPs covering 195+ countries. It offers HTTPS/SOCKS5 support, a REST API, and a Chrome extension (for easy connections).

It offers multiple proxy types, including static residential, private static residential, proxy servers, private proxy servers, and dedicated proxy servers. In addition, you can use its YouTube proxies to scrape large amounts of video data for AI use cases.

Pricing: Webshare offers a free trial with 10 proxies. Paid tiers start at $2.99/month.

Bright Data

Bright Data runs one of the largest proxy networks in the entire industry. It has pre-built scraper APIs, browser API (with built-in anti-captcha), and a curated data marketplace, making it a powerful web scraping ecosystem.

It supports industry-standard HTTPS and SOCKS5 protocols. You get all major proxy types, including residential, datacenter, ISP, and mobile proxies, with geo-location targeting.

Also, mobile and residential proxies report a 99.95% success rate, while ISP proxies allow you to retain the same IPs indefinitely for session-sensitive scraping workflows.

Pricing: You can test Bright Data with a free trial. Afterwards, its pay-as-you-go plan bills $4 per GB.

Oxylabs

Oxylabs is another major player delivering enterprise-scale proxy performance with a 99%+ success rate and sub-second average response time, making it suitable for high-volume scraping operations. It offers a web scraper API and an anti-bot headless browser designed to handle protected targets.

Oxylabs supports HTTPS and SOCKS5 protocols. The platform offers residential, datacenter (shared/dedicated), ISP (shared/dedicated), and mobile proxies with geo-targeting and unlimited concurrent sessions. Its datacenter IPs report a 99.9% success rate with 0.25 second response times, while ISP proxies put no limit on session duration.

Pricing: The Oxylabs free trial includes five free IPs. Paid plans start at $5.4/GB.

SOAX

SOAX’s specialty is a sizeable residential proxy pool with a 99.95% success rate and sub-second response times. It also features a Web Data API with built-in anti-captcha and anti-WAF measures, IP rotation, and dynamic content rendering. You get support for HTTPS, SOCKS5, UDP, and QUIC protocols with its residential, mobile, and datacenter (shared/dedicated) proxy pools.

Its residential proxies provide unlimited concurrent sessions, automatic IP rotation, city-level geo-targeting, and customizable sticky sessions. Likewise, mobile proxies allow geo-targeting, configurable session durations, and IP persistence for up to 60 minutes.

Pricing: A 3-day trial with 400 MB costs $1.99. Afterwards, paid plans start at $90/month.

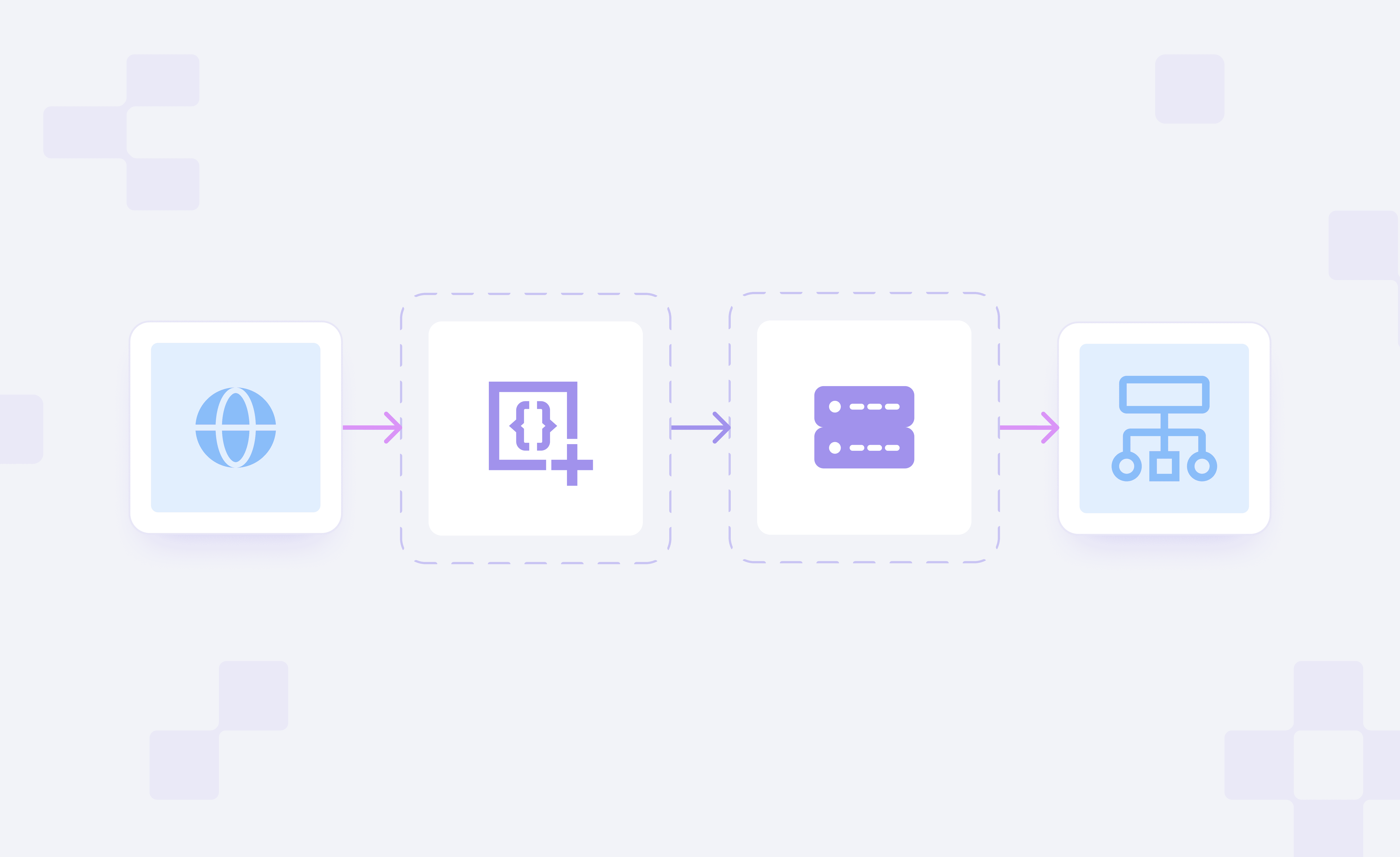

Why Proxies for Web Scraping?

Trying to scrape a large amount of data with a single IP address from a sensitive target is practically impossible. You’ll get blocked quickly because of various factors, such as IP location mismatch, too many requests, or suspicious user behaviour.

The following sections detail the limitations scrapers might face without a good proxy.

IP Blocks, Rate Limits, & Bans

It’s common to safeguard a web server by restricting repeated requests from a specific IP address within a short time frame to prevent spam, scraping, and cyberattacks. When in action, this can result in an error like “HTTP 429 Too Many Requests.”
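
When a scraper does hit an HTTP 429, the usual response is to slow down rather than retry immediately. The sketch below shows one common approach, exponential backoff with jitter. The function name, the `base` and `cap` defaults, and the assumption that `Retry-After` arrives as a number of seconds (it can also be an HTTP date) are illustrative choices, not values taken from any provider.

```python
import random


def retry_delay(attempt, retry_after=None, base=1.0, cap=60.0):
    """Return seconds to wait before retrying a request that got HTTP 429.

    attempt     -- zero-based retry count (0 for the first retry)
    retry_after -- Retry-After header value in seconds, if the server sent one
    base, cap   -- illustrative defaults, not values from any provider
    """
    if retry_after is not None:
        # Honour the server's hint, but never wait longer than the cap.
        return min(float(retry_after), cap)
    # Exponential backoff: base, 2*base, 4*base, ... bounded by cap.
    ceiling = min(cap, base * (2 ** attempt))
    # Full jitter spreads retries out so parallel workers do not sync up.
    return random.uniform(0, ceiling)
```

A caller would sleep for `retry_delay(attempt, response_header_value)` seconds before the next attempt and give up after a fixed number of retries.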

Depending on the web application firewall (WAF), granular access controls can be applied by IP, referrer, host, country, and more. In addition, IP reputation checks, behavioural traffic analysis, and fingerprinting can also trigger bans.

Therefore, pairing a proxy with an anti-detect browser gives you the best chance of scraping sensitive websites.

Geo-Restrictions & Location Targeting

You might have encountered the “This content is currently not available in your region” error when trying to access a website (such as YouTube or Netflix) that enforces location-based restrictions. It is a classic mechanism for denying access to users in certain locations based on their IP address.

In such cases, not only does a proxy act as a web unlocker, but it also allows you to scrape “local” data, which is useful in competitor analysis and market research.

Scaling and Requests Management

Scraping small volumes of data with a single IP often resembles real user behaviour and isn’t flagged. However, detection algorithms become suspicious when they observe repeated attempts to scrape large catalogs of data from one IP address, or from a handful of them, without proper measures in place.

Manually, it’s extremely difficult, if not downright impossible, to manage a huge pool of IP addresses and configure rotation to circumvent bans. With proxies, however, one can enable IP rotation and automatically replace blocked IPs with fresh ones. For instance, rotating proxies becomes essential when scraping large image datasets to prevent rate limiting and IP bans.
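
Rotating proxy services usually handle this on the provider side, but the idea can be sketched client-side in a few lines. The `ProxyPool` class below is a hypothetical helper, not part of any library, and the addresses come from a reserved documentation range.

```python
from collections import deque


class ProxyPool:
    """Round-robin pool that drops proxies reported as blocked."""

    def __init__(self, proxies):
        self._active = deque(proxies)
        self.blocked = set()

    def next(self):
        """Return the next proxy and move it to the back of the queue."""
        if not self._active:
            raise RuntimeError("no usable proxies left in the pool")
        proxy = self._active[0]
        self._active.rotate(-1)
        return proxy

    def mark_blocked(self, proxy):
        """Remove a proxy after the target blocks it (e.g. HTTP 403 or 429)."""
        if proxy in self._active:
            self._active.remove(proxy)
            self.blocked.add(proxy)

    def __len__(self):
        return len(self._active)


pool = ProxyPool([
    "http://198.51.100.10:8000",  # placeholder addresses, not real proxies
    "http://198.51.100.11:8000",
    "http://198.51.100.12:8000",
])
first = pool.next()
pool.mark_blocked(first)  # pretend the target banned this IP
```

After the simulated ban, later calls to `pool.next()` alternate between the two remaining addresses. A fuller version would also top the pool up with fresh IPs from the provider.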

What are Web Scraping Tools?

There are multiple scraping tools to start with. Which one fits the bill depends on your programming skills, local hardware, and project demands.

The following sections are aimed at beginners to help them get off to the right start.

Browser Extensions

Browser extensions are the easiest way to get started with no-code scraping.

For example, Instant Data Scraper (a Chrome extension) uses AI-powered analysis to inspect a website's HTML structure and list the data available for extraction. This tool also supports automatic navigation, infinite scrolling, and data export to spreadsheets or CSV files.

However, since these extensions run within the browser, they aren’t a great fit for massive datasets and may feel slow during heavy scraping tasks.

Local Scraping Software

These are local, PC-installed scraping tools that serve as a bridge between scraping browser extensions and high-level programming frameworks.

One such example is ParseHub, where you can simply open a website, select data to scrape, and download results in JSON and Excel. It doesn’t require coding and can handle infinite scrolling and pop-ups.

While it's good for entry to mid-level scraping projects, the processing power of the local machine can be a limiting factor.

Cloud-Based Scraping Platforms

Cloud scrapers, such as Web Scraper, take it to the next level with managed scraping on remote servers. They handle infrastructure and proxies behind the scenes, while you manage projects via a UI or API.

The upside is easy scalability and a hands-off approach to scraping. However, like any cloud service, project costs can easily shoot through the roof.

Custom Scripts and Frameworks

Developers often seek ultimate flexibility in managing their scraping operations.

Tools like Beautiful Soup (an HTML/XML parsing library) and Scrapy (a crawling framework) cater to such demanding use cases: you write scripts that crawl websites, extract data from static pages, and export it as JSON, CSV, or XML. Scrapy also offers multiple deployment options, including the cloud and your own servers.

In addition, there are browser automation tools such as Playwright, Puppeteer, and Selenium that can act like real web browsers to scrape JavaScript-heavy pages.

The only trade-off with these powerful scraping tools is that you need to have programming skills.
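
Whichever framework you pick, a custom script has to be told to send its traffic through a proxy. As a minimal sketch using only the Python standard library, the helpers below build a proxy URL and a `urllib` opener that uses it. `proxy_url` and `opener_for` are hypothetical names, and the host and credentials are placeholders rather than a real endpoint.

```python
import urllib.request
from urllib.parse import quote


def proxy_url(host, port, username=None, password=None, scheme="http"):
    """Build a proxy URL, percent-encoding credentials that contain symbols."""
    if username is None:
        return f"{scheme}://{host}:{port}"
    user = quote(username, safe="")
    pwd = quote(password or "", safe="")
    return f"{scheme}://{user}:{pwd}@{host}:{port}"


def opener_for(url):
    """Return a urllib opener that sends HTTP and HTTPS traffic via the proxy."""
    handler = urllib.request.ProxyHandler({"http": url, "https": url})
    return urllib.request.build_opener(handler)


# proxy.example.com and the credentials are placeholders, not a real endpoint.
url = proxy_url("proxy.example.com", 8080, "user", "p@ss:word")
opener = opener_for(url)
# opener.open("https://example.com", timeout=10) would perform the request.
```

Percent-encoding matters because passwords often contain characters such as `@` or `:` that would otherwise break the URL.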

Choosing a Reliable Proxy Provider for Web Scraping

The following sections present multiple factors (such as scraping objectives, IP pools, rotation, etc.) to consider when shopping for the ideal proxy provider.

Align Proxy Type With Your Scraping Goals

This depends on the target's risk profile and the scraping volume. For example, large-scale data collection from e-commerce and social media platforms is best done with residential proxies, whereas high-speed, low-risk tasks are better suited to datacenter proxies.

Likewise, budget is a critical constraint, as scraping with mobile/residential proxies costs significantly more, especially when the scraping volume is high. That spend is wasted if the target has weak anti-bot defences.

Evaluate IP Pool Size and Geographic Coverage

Make sure the proxy provider has a sizeable pool in the geographical region you care about. This gives you access to hundreds or thousands of proxies for a specific location (important for rotation), so you can bypass geo-restrictions and spread the request load evenly.

A large pool reduces IP reuse, which keeps each address under IP-based rate limits and ultimately helps avoid bans.
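
To put a rough number on "large", you can divide your planned request rate by the per-IP rate the target tolerates. The function below is a back-of-the-envelope sketch. The `headroom` factor of 1.5 and the example figures are arbitrary assumptions, and real per-IP limits are rarely published, so they have to be estimated by testing.

```python
import math


def min_pool_size(total_requests_per_min, per_ip_limit_per_min, headroom=1.5):
    """Estimate how many IPs keep each address under a per-IP rate limit.

    headroom > 1 adds spare IPs to absorb bans and uneven rotation;
    1.5 is an arbitrary illustrative value.
    """
    if per_ip_limit_per_min <= 0:
        raise ValueError("per-IP limit must be positive")
    return math.ceil(total_requests_per_min / per_ip_limit_per_min * headroom)


# Hypothetical workload: 6,000 requests/min against a 30 requests/min/IP limit.
needed = min_pool_size(6000, 30)
```

With these made-up figures the estimate comes to 300 IPs for that one location.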

Assess Rotation Controls and Session Management

Look for providers offering flexible IP rotation options: per request, fixed interval, and sticky. Again, this should match your scraping target and workflow needs.

For example, sticky IPs suit login-based workflows and websites that track users from login to logout. IP rotation, by contrast, helps evade anti-bot systems by making traffic look like it comes from many real users.
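
Providers typically implement these modes on their side, but the difference between sticky and per-request rotation is easy to see in a client-side sketch. Both function names below are hypothetical, and hashing the session ID is just one possible way to pin a session to an IP.

```python
import hashlib
import itertools


def sticky_proxy(session_id, proxies):
    """Map a session to the same proxy every time (sticky session)."""
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(proxies)
    return proxies[index]


def per_request_rotation(proxies):
    """Return an iterator that yields a different proxy for each request."""
    return itertools.cycle(proxies)


# Placeholder addresses from a reserved documentation range.
proxies = ["http://203.0.113.1:8000", "http://203.0.113.2:8000", "http://203.0.113.3:8000"]
login_proxy = sticky_proxy("login-42", proxies)   # same result on every call
rotation = per_request_rotation(proxies)
```

A login flow would reuse `login_proxy` for every request in the session, while a catalog crawl would call `next(rotation)` before each request.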

Confirm Transparent Pricing and Clear Usage Limits

Look for a clear pricing structure (per-GB or per-IP) and verify it against the provider’s pricing pages.

Equally important is checking their fair usage policy. Data caps are usually mentioned in the terms of use or in an FAQ. If they aren’t published, ask customer support about bandwidth limits and overage charges.
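
A quick calculation shows which billing model is cheaper for a given workload. The sketch below uses the $1.75/GB and $2.25/IP figures from the comparison table purely as sample inputs. It assumes both are monthly prices and that per-IP plans stay within fair usage, neither of which the table confirms.

```python
def per_gb_cost(gb_used, price_per_gb):
    """Monthly cost on a bandwidth-billed plan."""
    return gb_used * price_per_gb


def per_ip_cost(ip_count, price_per_ip):
    """Monthly cost on a per-IP plan (bandwidth assumed within fair use)."""
    return ip_count * price_per_ip


def break_even_gb(ip_count, price_per_ip, price_per_gb):
    """Traffic volume at which both billing models cost the same."""
    return per_ip_cost(ip_count, price_per_ip) / price_per_gb


# Sample inputs only: 50 IPs at $2.25 each versus bandwidth at $1.75/GB.
threshold = break_even_gb(50, 2.25, 1.75)
```

Here the 50 IPs cost $112.50, so the per-GB plan is cheaper below roughly 64 GB of traffic and the per-IP plan is cheaper above it.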

Check IP Sourcing Transparency and Ethical Standards

Ethical sourcing means that every residential/mobile IP address is sourced with explicit user consent (generally in exchange for monetary rewards), and that participants can opt out at any time. This framework ensures that users fully understand that third parties use their bandwidth and that their devices will function as exit nodes.

You can ask for documented proof of the consent chain, and the providers should be able to produce an auditable trail linking IPs to their legitimate users.

Review Support Quality and Industry Reputation

Depending on whether you have an in-house developer team, you might need 24/7 support. Better still is support via your preferred channel (call, email, or chat) in your native language.

Additionally, one should check platforms such as G2, Trustpilot, and Reddit for any obvious red flags.

Evaluate Performance Before Scaling

Despite the providers' claims, it’s best to take the trial or subscribe to the base tier to evaluate the use case fit yourself. Use this trial run to test the proxy's performance against your target site and to check support via demo queries before committing to larger subscriptions.
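
To make a trial run comparable across providers, it helps to log each request's status code and latency and reduce them to a few numbers. The helper below is a sketch with a hypothetical name. It treats 403 and 429 as "blocked", which is an assumption about how the target signals a ban, and the sample data is made up for illustration.

```python
from statistics import median


def summarize_trial(results):
    """Summarize (status_code, latency_seconds) pairs from a trial run."""
    if not results:
        raise ValueError("no results to summarize")
    ok_latencies = [lat for status, lat in results if 200 <= status < 300]
    blocked = sum(1 for status, _ in results if status in (403, 429))
    return {
        "success_rate": len(ok_latencies) / len(results),
        "block_rate": blocked / len(results),
        "median_latency": median(ok_latencies) if ok_latencies else None,
    }


# Made-up sample data for illustration only, not measurements.
sample = [(200, 0.42), (200, 0.38), (429, 0.10), (200, 0.55), (403, 0.12)]
report = summarize_trial(sample)
```

Running the same script against each candidate provider and the same target gives you figures you can set against the advertised success rates.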